Introduction

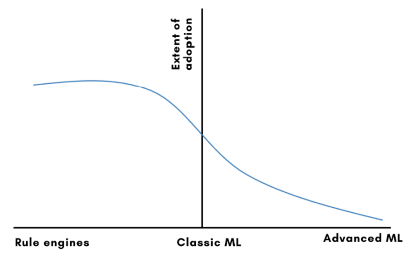

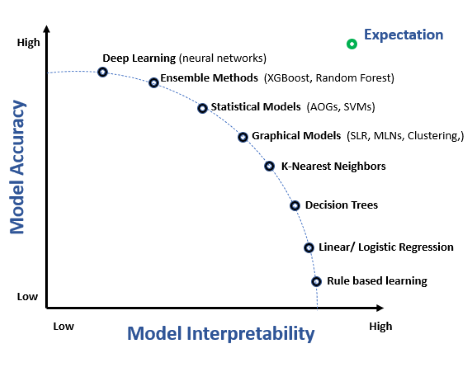

With packaged AI APIs on the market, more people are using AI than ever before, without being constrained by compute, data or R&D. This provides an easy entry point to AI and leaves users wanting more. The financial services industry shows both sides of the story: very early adopters using advanced AI techniques, and late entrants who are still far from using any kind of AI. The majority of financial institutions today use some kind of AI and have strong ambitions to accelerate its deployment.

However, the first legal framework for AI is here! Proposed by the European Commission, the regulation aims to develop ‘human-centric, sustainable, secure, inclusive and trustworthy AI’. One of the many mandates in the proposal is that AI systems must be transparent and traceable. Moreover, the General Data Protection Regulation (GDPR), which came into force in May 2018, also obliges companies to explain in detail how and when they make automated decisions about customers, and gives customers the right to challenge these decisions. These additional requirements highlight the ever-increasing need for Explainable AI, which was discussed briefly in our previous blog.

The entry barriers to AI adoption in Financial Institutions (FIs)

AI engines built using complex techniques like Deep Learning are hard to build. Running these systems during training and inference is highly resource-intensive. Once built, they need to perform consistently and meet various metrics before they are production-ready. Even then, organizations are apprehensive about deploying these systems in core processes, since such solutions carry a somewhat justified reputation for being ‘black boxes’ characterized by poor transparency.

The most common challenges faced by FIs in AI adoption are:

- Trust and explainability - If the system is an unexplainable black box, FIs will be highly cautious about using it

- Long time to customize - If it takes very long to customize and requires long R&D, the opportunity cost outweighs the value added

- Ease of controls - If it needs special skills to control, it is very tough for business users to accept

- High cost to build - If all the effort is limited to one use case and is not reusable, ROI becomes a challenge!

A lot of work is already going into points 2 and 4, to simplify the build process of complex AI systems. But points 1 and 3 are just as important and need to be solved! To ensure that the solution enjoys confidence and trust, it is important to build additional transparency layers and controls for the users.

Explainable AI:

Providing explanations is a basic requirement of an AI engine. Given the diligence and attention required for financial transactions, if users do not trust the model, they will not use it, no matter how accurate it is! Such explanations can be categorized as:

- Prediction related - how the engine arrived at the prediction

- Model related - how the model analyzed data and what it has learnt from it

- Data related - how the data is used to train the model

- Influence and controls - what can influence the system, and therefore how it can be controlled

Explainable Artificial Intelligence models combat the opacity of neural networks. They delve into the deep neural network and trace the values that influenced a decision. Users can understand the algorithm well enough to predict how the outcome will change if the input values are changed, and can trace the finest details of the network used to derive an answer.
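To make the idea of predicting how the outcome changes when an input changes a little more concrete, here is a minimal what-if sketch in Python. It is only an illustration: the `score` function, its weights and the feature meanings are hypothetical stand-ins for a trained model, not part of any real system described in this post.

```python
import numpy as np

def score(x):
    """Hypothetical approval model: a logistic function of three inputs.

    The weights and bias are made up for illustration only.
    """
    weights = np.array([0.8, -1.5, 0.3])  # e.g. income, debt ratio, tenure
    bias = -0.2
    return 1.0 / (1.0 + np.exp(-(x @ weights + bias)))

def what_if(model, x, feature, new_value):
    """Change one input value and report the score before and after."""
    x_new = x.copy()              # leave the original input untouched
    x_new[feature] = new_value
    before, after = model(x), model(x_new)
    return before, after, after - before

applicant = np.array([1.0, 0.9, 0.5])
# What happens to the score if the debt ratio (feature 1) drops to 0.4?
before, after, delta = what_if(score, applicant, feature=1, new_value=0.4)
print(f"score before: {before:.3f}, after: {after:.3f}, change: {delta:+.3f}")
```

A probe like this treats the model as a black box: it shows what changes, but not why the network responded that way.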

Need for explainability:

- Enhance model performance: To debug a DL model, one needs to investigate a broad range of causes. These models have a larger error space and require longer iterations, making them uniquely challenging to debug. Further, the lack of transparency leads to lower trust and an inability to fix or improve the model. AI explanations help ensure that the model behaves as expected, and provide opportunities to improve the model or the training data.

- Investigate correlation: Traditionally, ML models operate through opaque processes - you know the input and the output, but there is no explanation as to how the model reached a particular decision. Explainability brings transparency to the process, enabling users to investigate correlations between various factors and determine which factors carry the most weight in the decision.

- Enable advanced controls: Transparency in the decision process enables the user to supervise the AI model and adjust its behavior, rather than operating blind.

- Ensure unbiased predictions: Knowing the reasons behind a particular decision helps detect and prevent undesirable bias in the data.

Multiple options available today

There is usually a trade-off required between accuracy and interpretability - as the AI algorithm moves towards accuracy, interpretability starts fading. The graph below shows some of the machine learning techniques mapped along with their levels of explainability and accuracy.

Technical users will be more inclined towards accuracy, consistent performance and a longer model life cycle, whereas business users and compliance teams expect transparency in understanding the outcomes. An ideal explainable model sits in the upper right quadrant, where accuracy and interpretability are both at their highest.

With more clarity emerging on regulations in recent times, there are clear guidelines on the need for transparency, interpretability and controls! There are multiple horizontal tools aiming to help AI experts roll out complex AI engines faster. But their explanations are either too technical or very generic, and most of them offer very few controls over AI solutions. Since financial services is one of the most regulated industries, it is important to ensure transparency and provide the most comprehensive controls to prevent any large financial impact.

Arya-XAI - Translating ‘black box DL models’ into interpretable models

At Arya.ai, we recently released a new framework, ‘Arya-XAI’, to simplify DLOps in production for financial institutions. Arya-XAI is designed specifically for financial institutions and offers the contextual explanations and controls they require.

Arya-XAI enhances and explains your DL model predictions by generating new, simple and interpretable explanations in real time, as required by various stakeholders. Most current approaches use permute-and-predict methods to explain black box DL models. These provide only an approximation and don't explain how the network itself functions, which makes it hard to understand or explain the true-to-model functioning.
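For readers unfamiliar with permute-and-predict methods, the sketch below shows one common member of that family, permutation feature importance, in plain NumPy. It is a generic illustration and not Arya-XAI's method: the `black_box` function is a hypothetical stand-in for a trained network, and the data is synthetic.

```python
import numpy as np

def permutation_importance(predict, X, y, n_repeats=10, seed=0):
    """Mean increase in squared error when each feature column is shuffled."""
    rng = np.random.default_rng(seed)
    baseline = np.mean((predict(X) - y) ** 2)
    importances = np.zeros(X.shape[1])
    for j in range(X.shape[1]):
        increases = []
        for _ in range(n_repeats):
            X_perm = X.copy()
            # Shuffling one column breaks its link to the target
            X_perm[:, j] = rng.permutation(X_perm[:, j])
            increases.append(np.mean((predict(X_perm) - y) ** 2) - baseline)
        importances[j] = np.mean(increases)
    return importances

def black_box(X):
    """Hypothetical model: relies heavily on feature 0, ignores feature 2."""
    return 3.0 * X[:, 0] + 0.5 * X[:, 1]

rng = np.random.default_rng(42)
X = rng.normal(size=(500, 3))   # synthetic inputs
y = black_box(X)                # targets the model fits perfectly
scores = permutation_importance(black_box, X, y)
print(scores)
```

The scores rank the features by how much the error grows when each one is scrambled, but they are computed only from inputs and outputs. Nothing in the procedure looks inside the model, which is the limitation described above.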

Arya-XAI decodes this ‘true-to-model’ feature weightage, along with node-level weightages, for any neural network. Thus, the framework not only improves the interpretability of DL models substantially, but also offers easy-to-understand reasons behind the outcome.

Benefits:

Arya-XAI offers better granularity and specificity, and also:

- Provides feature importance that's comparable and combinable across the dataset, and justifies why the prediction was accurate

- Opens up the network and offers node and layer level explanations

- Offers contrastive explanations on how the variations of the features can impact the final verdict

- Monitors the model in production and informs about the model drift or data drift

- Provides more advanced controls for Audit/Risk/Quality control teams to ensure the AI solution adheres to business guidelines
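As a rough illustration of the data drift monitoring mentioned in the list above, the sketch below computes a Population Stability Index (PSI) between a reference sample and a production sample of one feature. This is a widely used generic drift measure and is shown here only as an assumption about what such a monitor might compute; it is not a description of how Arya-XAI detects drift, and the data is synthetic.

```python
import numpy as np

def population_stability_index(expected, actual, bins=10, eps=1e-6):
    """PSI between a reference sample and a new sample of one feature."""
    # Interior bin edges taken from quantiles of the reference sample
    inner = np.quantile(expected, np.linspace(0, 1, bins + 1))[1:-1]
    # searchsorted maps every value to a bin index in 0..bins-1
    exp_counts = np.bincount(np.searchsorted(inner, expected), minlength=bins)
    act_counts = np.bincount(np.searchsorted(inner, actual), minlength=bins)
    # Clip to avoid log(0) when a bin is empty
    exp_frac = np.clip(exp_counts / len(expected), eps, None)
    act_frac = np.clip(act_counts / len(actual), eps, None)
    return float(np.sum((act_frac - exp_frac) * np.log(act_frac / exp_frac)))

rng = np.random.default_rng(0)
training = rng.normal(0.0, 1.0, size=5000)   # reference data
stable = rng.normal(0.0, 1.0, size=5000)     # same distribution
shifted = rng.normal(1.0, 1.0, size=5000)    # mean has drifted

psi_stable = population_stability_index(training, stable)
psi_shifted = population_stability_index(training, shifted)
print(f"PSI stable: {psi_stable:.4f}, PSI shifted: {psi_shifted:.4f}")
```

A value near zero suggests production data still looks like the training data, while a clearly larger value flags a feature worth investigating. Where to set the alert threshold is a business decision.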

With Arya-XAI, the black box AI is now a little more transparent. We believe the Arya-XAI framework can act as a catalyst to move more complex AI engines into production with confidence.

Stay tuned for our next blog on the first mode operation of Arya-XAI and details about the features!